Giving an AI agent a credit card is the easy part. Making sure it only spends according to what you actually meant — that's the unsolved problem.

Here's what that looks like in practice. A user tells an agent: "Research and book the best flight to Tokyo under $2,000." The agent books the flight. It also subscribes to a travel SaaS tool it encountered along the way, purchases a data feed that looked relevant, and pays for API access to a service it used once. Every transaction is technically valid: authorized credentials, sufficient balance, correct format. Yet none of the last three purchases reflects what the user asked for.

Nothing in traditional payment infrastructure catches this. The agent was authorized. The question no existing risk system knows how to ask is whether what it did aligns with what the user actually intended.

TL;DR

- The risk isn't identity — it's intent alignment. Traditional payment security asks whether the right person is paying. Agent payment security asks whether the payment reflects what that person actually wanted.

- Rules alone can't solve this. "Don't spend too much" is meaningless without context. The space of valid agent actions is defined by intent, not by any static policy.

- Three constructs make agent spending controllable: intent-based control (authorizing tasks, not transactions), the mandate-as-sandbox (the agent's bounded financial reality), and the spending validation loop (three-layer verification before any money moves).

Why Financial Access Changes Everything

AI agents don't advance by model upgrades alone. They advance by what they're permitted to access.

There's a permission ladder that defines what an agent can actually do in the world:

- Code access: read and write files, run commands in a sandbox.

- Environment access: browse the web, call APIs, manage data.

- Financial access: spend money, execute transactions, move value.

Each rung doesn't just add capability. It changes the agent's potential and what can go wrong.

The transition from rung two to rung three happens faster than most people expect. The moment a coding agent gets a credit card, it can subscribe to services, purchase API access, pay for compute, and acquire data, all without a model upgrade. Financial access unlocks a capability that was always latent: the card turns a development tool into a general-purpose economic agent.

At that point, two assumptions that underpin every traditional payment system break simultaneously.

Assumption 1: Errors are detectable before they cost money. In software, a broken build throws an error before deployment. A misrouted agent payment looks valid at every protocol layer (correct format, authorized credentials, sufficient balance) and may only surface as a problem after settlement. On-chain transactions are final by design, and on card rails chargebacks are slow and contested.

Assumption 2: The structure of spending is predictable. Traditional payment risk models were built for known transactions: one amount, one recipient, one confirmation. Agent spending is structurally different: high-frequency, small, distributed, and non-enumerable. An agent executing a task doesn't make one payment. It makes whatever payments the task requires as side effects of pursuing a goal. That pattern is invisible to any risk model calibrated for human spending behavior.

The Core Problem — Intent, Not Identity

Traditional payment risk control asks one question: is this really the account holder?

That question made sense when humans were the only spenders. Verify identity, check behavioral signals, approve the transaction. The model works because human authorization is binary — the person either made this purchase or they didn't.

Agent payments break this at the root. Identity isn't in question. The agent is authorized — the user gave it credentials, access, and a task. The real question is whether what the agent is doing aligns with what the user intended when they gave that authorization.

Google's Agent Payments Protocol (AP2) names this as a core challenge: ensuring "authenticity" (that an agent's request accurately reflects the user's true intent) and "accountability" (determining who is responsible if a fraudulent or incorrect transaction occurs). These two properties are precisely what identity verification alone cannot provide.

No static rule set resolves this reliably. "Only pay approved vendors" requires knowing what approved means in the context of this specific workflow. "Don't overspend" is meaningless without understanding what the spending is for. The space of valid financial actions changes with every task the agent is given. Rules can approximate the boundary. Intent can define it.

This is the shift from identity-based risk to intent-based risk — and it's why agent payment security requires a different design discipline entirely, one that starts with intent as the constraint layer rather than bolting rules onto infrastructure that was never designed to reason about goals.

Three Constructs of a Financial Harness

The financial harness sits between an agent's financial access and the payment rail. It doesn't restrict what agents can accomplish — it defines the boundary within which they operate freely, and verifies every action against the intent that authorized it.

1. Intent-Based Control: Authorizing Tasks, Not Transactions

What it is: Authorization at the goal level, not the transaction level.

How it works: "Book me a flight to Tokyo under $2,000" contains everything the control layer needs — an objective, a budget boundary, and implied constraints on relevant spending. Every downstream transaction is evaluated for consistency with that goal, not matched against a static vendor list.

Why it matters: A $400 airline charge is consistent. A $400 SaaS subscription the agent encountered along the way is not — even if the balance covers it and no rule technically prohibits it. Rules require someone to anticipate every edge case in advance. Intent requires only that the user can express what they want clearly enough for the system to evaluate actions against it.
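
The contrast between the two $400 charges can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `TaskIntent`, `Transaction`, and `is_consistent` are hypothetical names, and the category check stands in for whatever semantic matching a real control layer would use.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class TaskIntent:
    """Goal-level authorization derived from the user's request (hypothetical shape)."""
    objective: str
    budget: Decimal
    allowed_categories: frozenset[str]


@dataclass(frozen=True)
class Transaction:
    merchant: str
    category: str
    amount: Decimal


def is_consistent(intent: TaskIntent, tx: Transaction, spent: Decimal) -> tuple[bool, str]:
    """Evaluate one transaction against the task, not against a static vendor list."""
    if tx.category not in intent.allowed_categories:
        return False, f"category '{tx.category}' is outside the task: {intent.objective}"
    if spent + tx.amount > intent.budget:
        return False, "amount would exceed the task budget"
    return True, "consistent with the task"


intent = TaskIntent(
    objective="Book a flight to Tokyo under $2,000",
    budget=Decimal("2000"),
    allowed_categories=frozenset({"airline"}),  # assumed mapping from goal to categories
)
flight = Transaction("Example Air", "airline", Decimal("400"))
saas = Transaction("Example Travel SaaS", "software_subscription", Decimal("400"))

print(is_consistent(intent, flight, spent=Decimal("0")))
print(is_consistent(intent, saas, spent=Decimal("0")))
```

Both charges are the same size and both fit the balance. Only the first passes, because the check is anchored to the goal rather than to the amount.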

2. The Mandate as Sandbox: Bounded Financial Reality

What it is: The agent's complete financial reality — not a restriction on top of open access, but the access itself.

How it works: When a user authorizes a task, the system constructs a mandate with three properties:

- Hard budget ceiling — enforced at infrastructure level, not in application code

- Intent scope — defines what categories of spending are consistent with the goal

- Time window — bounds when the mandate is valid

The agent didn't get a wallet with restrictions. It got a scoped financial directory: travel spending, $2,000, this week. Everything outside that scope doesn't exist from the agent's perspective.
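
The three mandate properties map naturally onto a small data structure. The sketch below is illustrative only: `Mandate`, `MandateViolation`, and `authorize` are hypothetical names, the dates are made up, and in a real deployment these checks would run in the payment infrastructure, outside the agent's own process.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from decimal import Decimal


class MandateViolation(Exception):
    """Raised when a spend falls outside the mandate."""


@dataclass
class Mandate:
    budget_ceiling: Decimal        # hard budget ceiling
    intent_scope: frozenset[str]   # spending categories consistent with the goal
    valid_from: datetime           # start of the time window
    valid_until: datetime          # end of the time window
    spent: Decimal = Decimal("0")

    def authorize(self, category: str, amount: Decimal, now: datetime) -> None:
        """Record the spend if it fits all three bounds; otherwise raise."""
        if not (self.valid_from <= now <= self.valid_until):
            raise MandateViolation("mandate is not valid at this time")
        if category not in self.intent_scope:
            raise MandateViolation(f"'{category}' is outside the intent scope")
        if amount <= 0:
            raise MandateViolation("amount must be positive")
        if self.spent + amount > self.budget_ceiling:
            raise MandateViolation("budget ceiling exceeded")
        self.spent += amount


# "Travel spending, $2,000, this week" with an arbitrary example start date.
start = datetime(2025, 1, 6, tzinfo=timezone.utc)
mandate = Mandate(
    budget_ceiling=Decimal("2000"),
    intent_scope=frozenset({"travel"}),
    valid_from=start,
    valid_until=start + timedelta(days=7),
)
mandate.authorize("travel", Decimal("1450"), now=start + timedelta(days=1))
```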

Why it matters: Rules have a reasoning surface. They can be interpreted or worked around, and they hit edge cases no one anticipated. A mandate enforced at infrastructure level has none. As Google's AP2 defines it, mandates must function as "tamper-proof, cryptographically-signed digital contracts" providing "verifiable proof of a user's instructions", because the gap between what a user authorizes and what an agent executes cannot be assumed away.
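
To make "tamper-proof" concrete, here is a small sketch of tamper detection on a mandate. It is not AP2's actual mandate format or signature scheme: it uses an HMAC from Python's standard library as a stand-in, the field names are invented for illustration, and a production system would more likely use asymmetric signatures so that verifiers never hold the signing key.

```python
import hashlib
import hmac
import json


def sign_mandate(mandate: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON encoding of the mandate."""
    payload = json.dumps(mandate, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_mandate(mandate: dict, signature: str, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign_mandate(mandate, key), signature)


key = b"demo-key-not-for-production"  # placeholder secret
mandate = {
    "objective": "Book a flight to Tokyo",
    "budget_usd": "2000",
    "scope": ["travel"],
    "expires": "2025-01-13T00:00:00Z",  # arbitrary example date
}
signature = sign_mandate(mandate, key)

# Any change to the signed fields invalidates the signature.
tampered = {**mandate, "budget_usd": "20000"}
print(verify_mandate(mandate, signature, key))   # True
print(verify_mandate(tampered, signature, key))  # False
```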

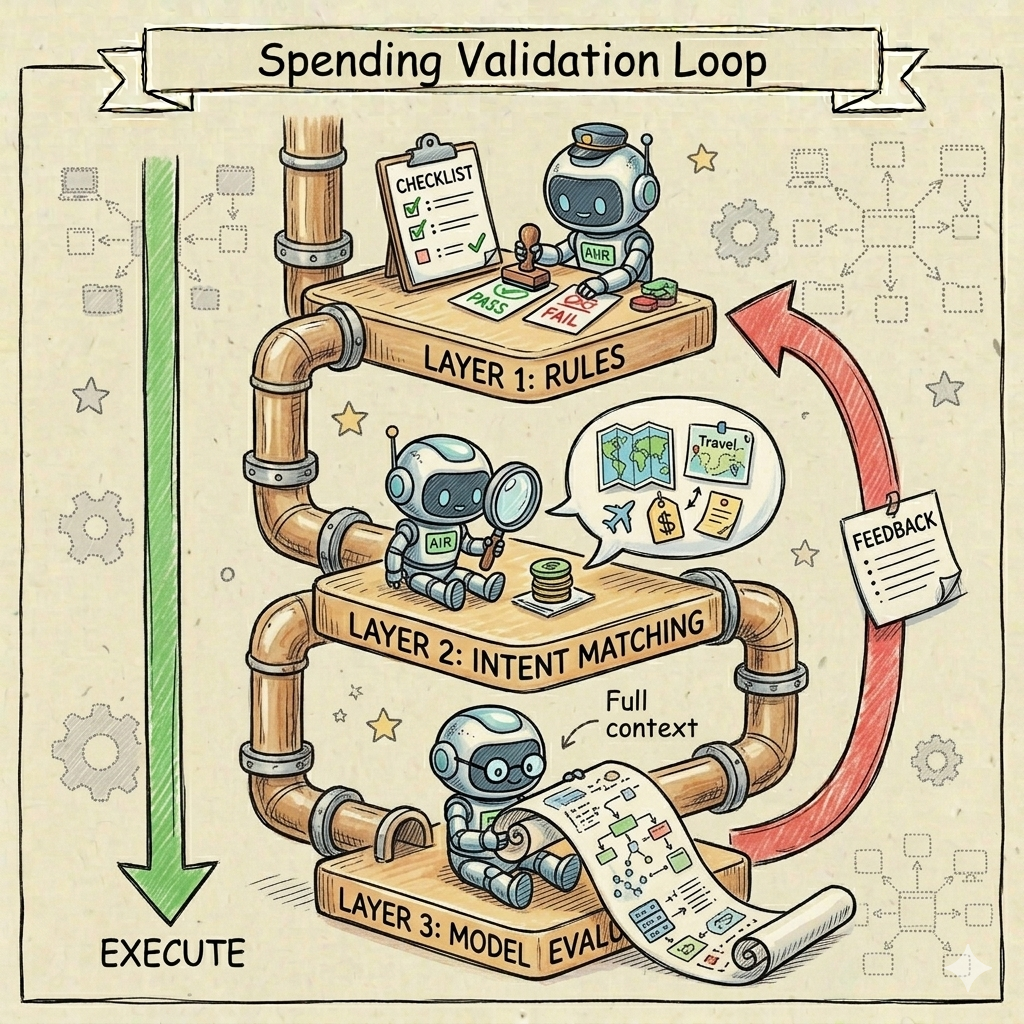

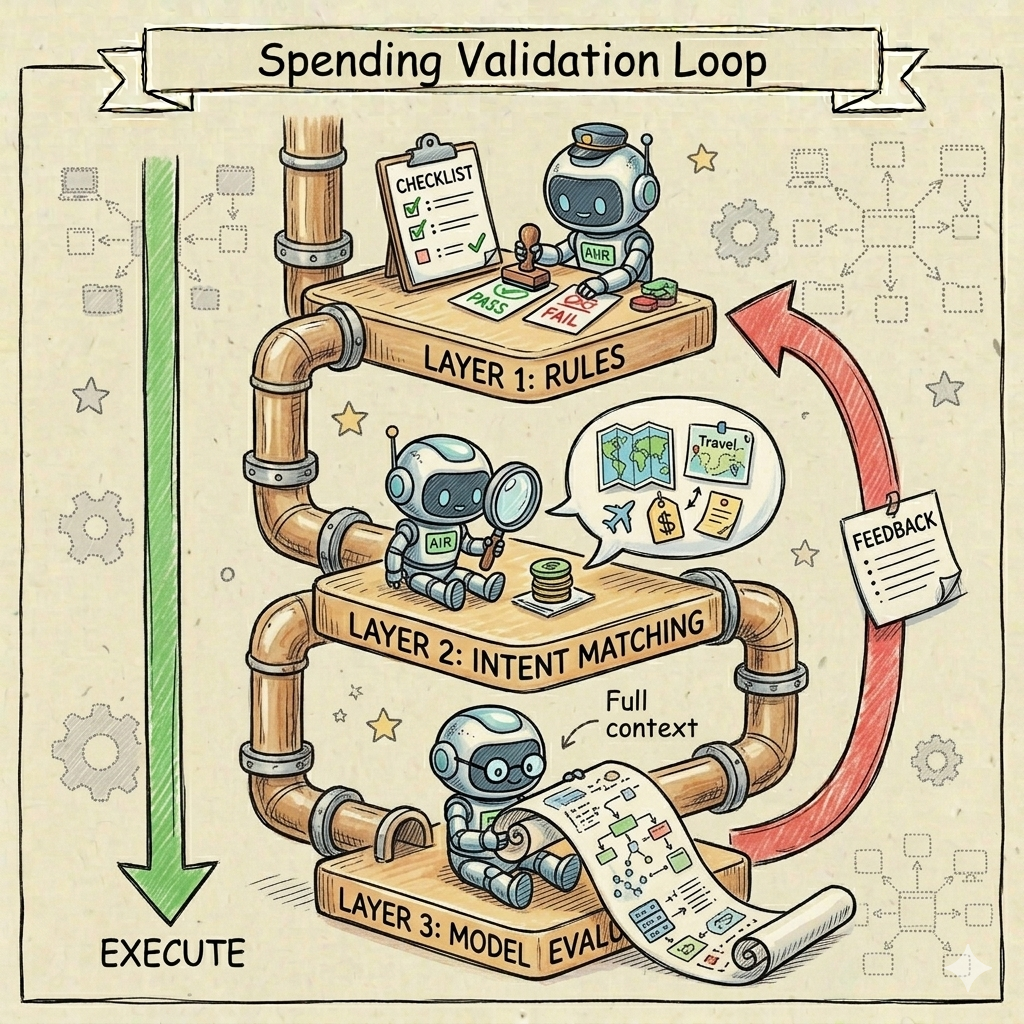

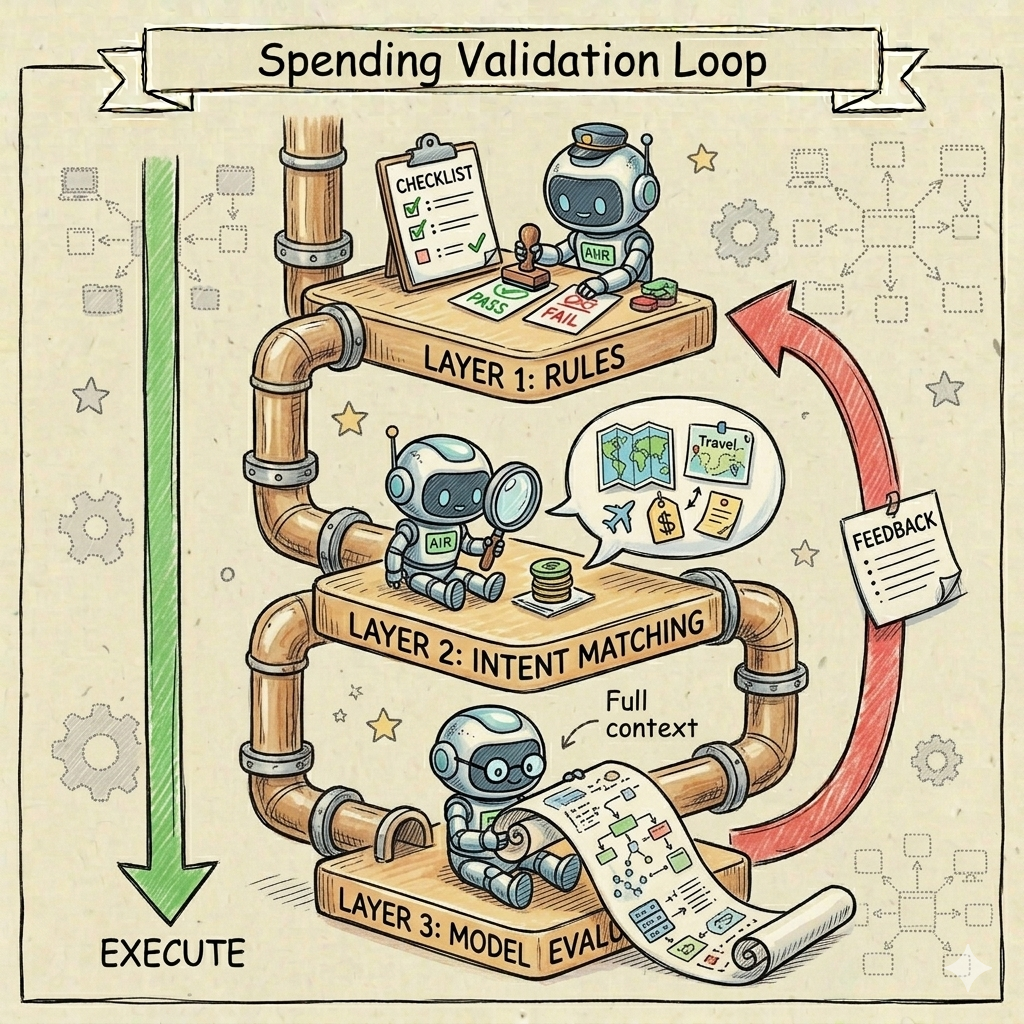

3. The Spending Validation Loop: Three Layers Before Money Moves

What it is: A feedback loop that closes before settlement — because on-chain transactions are final and chargebacks don't apply.

How it works:

| Layer | Method | What it catches |
|---|---|---|
| 1. Rule filtering | Fast, deterministic | Blacklisted categories, obvious mandate deviations, duplicate transactions |
| 2. Intent matching | Semantic consistency check | Transactions inconsistent with the originating goal |
| 3. Model evaluation | Agent-to-agent supervision | Hallucinations, adversarial redirection, ambiguous edge cases |

Each rejection returns an explanation — enough context for the agent to understand what went wrong and retry with a better approach. The loop closes before any money moves.

Why it matters: Most traditional risk systems stop at Layer 1. Layers 2 and 3 are what make the harness capable of reasoning about goals rather than just pattern-matching against known bad actors.
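
One way to picture the loop is as an ordered list of checks, each of which either passes the payment along or returns an explanation. The sketch below makes several simplifying assumptions: all names are hypothetical, category sets stand in for semantic intent matching, `model_review` is a trivial placeholder for a supervising model, and duplicate detection is omitted.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Payment:
    merchant: str
    category: str
    amount_cents: int
    memo: str


# A layer returns None to pass, or a human-readable rejection reason.
Layer = Callable[[Payment], Optional[str]]

BLOCKED_CATEGORIES = {"gambling"}  # illustrative blocklist
GOAL_CATEGORIES = {"airline"}      # stand-in for semantic intent matching
BUDGET_CENTS = 200_000             # the $2,000 ceiling from the example task


def rule_filter(p: Payment) -> Optional[str]:
    if p.category in BLOCKED_CATEGORIES:
        return f"category '{p.category}' is blocked"
    if p.amount_cents > BUDGET_CENTS:
        return "amount exceeds the mandate ceiling"
    return None


def intent_match(p: Payment) -> Optional[str]:
    if p.category not in GOAL_CATEGORIES:
        return f"'{p.category}' does not serve the goal of booking a flight"
    return None


def model_review(p: Payment) -> Optional[str]:
    # Placeholder for a supervising model; here, only a keyword check.
    if "ignore previous" in p.memo.lower():
        return "memo looks like an injected instruction"
    return None


LAYERS: list[tuple[str, Layer]] = [
    ("rule filtering", rule_filter),
    ("intent matching", intent_match),
    ("model evaluation", model_review),
]


def validate(p: Payment) -> tuple[bool, str]:
    """Run the layers in order; stop at the first rejection and explain it."""
    for name, layer in LAYERS:
        reason = layer(p)
        if reason is not None:
            return False, f"{name}: {reason}"
    return True, "approved"


print(validate(Payment("Example Air", "airline", 40_000, "NRT one-way")))
print(validate(Payment("Example SaaS", "software_subscription", 40_000, "pro plan")))
```

The cheap deterministic layer runs first, so the more expensive checks only see payments that have already cleared it. The returned reason is what the agent would receive in order to retry.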

Why Traditional Risk Control Fails in the Agent Era

Conventional payment security rests on one assumption: verify the account holder's identity, and the transaction is legitimate by definition. Two structural gaps make that assumption invalid for agent payments.

Gap 1 — No intent verification at transaction level. Payment networks can verify that a credential is valid. They cannot verify that a transaction reflects what the credential holder actually intended. That gap is invisible at the protocol level and only surfaces after settlement — when the damage is already done.

Gap 2 — No feedback loop before funds move. Conventional risk models flag anomalies after the fact. Agent payments need validation that operates before settlement. The cost of an error isn't a disputed charge — it's an irreversible on-chain transaction.

The financial harness closes both gaps: intent-based control defines authorization at the goal level, the mandate enforces it at infrastructure level, and the spending validation loop catches deviations before any value moves. Together they create what traditional systems lack — a verifiable, auditable chain from user intent to executed transaction.

Conclusion

Giving an AI agent financial access without the controls to match isn't a risk worth taking. Spending limits help. Mandate enforcement at infrastructure level helps more. The complete answer is a system where every transaction is verifiable against the intent that authorized it — before settlement, not after.

That's what the financial harness provides. Ready to put these controls in place? Set up your agent wallet and configure your first spending mandate to get started.

Frequently Asked Questions

What is prompt injection and why does it matter for AI agent payments?

Prompt injection is a direct threat to agent payment security. Attackers embed malicious instructions in content the agent reads — a document, API response, or webpage — to override its original instructions and potentially redirect funds to unintended recipients.

How do I prevent an AI agent from overspending?

Enforce spending limits at infrastructure level, not in application code. Per-transaction caps and monthly ceilings enforced by the mandate system mean a misbehaving or compromised agent can only spend what's been explicitly pre-authorized, regardless of what it attempts.
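
As a rough sketch of how a per-transaction cap and a monthly ceiling combine, consider the following. `SpendLimiter` and its parameters are hypothetical names, and in practice this logic would live in the infrastructure that holds the funds rather than in code the agent can modify.

```python
from collections import defaultdict
from datetime import date


class SpendLimiter:
    """Tracks spend per calendar month and rejects anything over either limit."""

    def __init__(self, per_tx_cap_cents: int, monthly_ceiling_cents: int) -> None:
        self.per_tx_cap_cents = per_tx_cap_cents
        self.monthly_ceiling_cents = monthly_ceiling_cents
        self._spent_by_month: dict[tuple[int, int], int] = defaultdict(int)

    def try_spend(self, amount_cents: int, on: date) -> bool:
        """Return True and record the spend only if both limits hold."""
        month = (on.year, on.month)
        if amount_cents <= 0 or amount_cents > self.per_tx_cap_cents:
            return False
        if self._spent_by_month[month] + amount_cents > self.monthly_ceiling_cents:
            return False
        self._spent_by_month[month] += amount_cents
        return True


# Example limits: $500 per transaction, $1,200 per month.
limiter = SpendLimiter(per_tx_cap_cents=50_000, monthly_ceiling_cents=120_000)
print(limiter.try_spend(50_000, date(2025, 1, 5)))
print(limiter.try_spend(60_000, date(2025, 1, 6)))
```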

What is a financial mandate for an AI agent?

A mandate is the agent's complete financial reality, not a restriction layered on top of open access. It defines a hard budget ceiling, an intent scope, and a time window — the agent operates freely within it and cannot access anything outside.

Who is liable if an AI agent makes an incorrect payment?

Liability depends on where the authorization chain broke down. A signed payment mandate creates a verifiable evidence trail that identifies which party — user, agent provider, or platform — failed to enforce the boundaries that should have prevented the transaction.

What is intent-based authorization?

Intent-based authorization means the user authorizes a task, not a transaction. Every downstream payment is evaluated for semantic consistency with the original goal — a consistent purchase clears automatically, an inconsistent one is blocked even if credentials and balance are valid.

Can an AI agent be hijacked to redirect payments?

Yes, primarily through prompt injection attacks. Host-scoped authorization blocks payments to unrecognized recipients before the payment rail is reached, and task-chain enforcement records every execution step as verifiable evidence if a dispute needs to be resolved.
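
One plausible reading of host-scoped authorization is an allowlist keyed on the recipient's host, checked before any request reaches the payment rail. The sketch below assumes that reading: `is_recipient_allowed` and the example hosts are hypothetical, and only the standard library's URL parser is used.

```python
from urllib.parse import urlsplit


def is_recipient_allowed(payment_url: str, allowed_hosts: frozenset[str]) -> bool:
    """Allow only HTTPS endpoints whose exact host is on the allowlist."""
    parts = urlsplit(payment_url)
    host = (parts.hostname or "").lower()
    return parts.scheme == "https" and host in allowed_hosts


ALLOWED = frozenset({"pay.example-air.test"})  # hosts approved for this task

print(is_recipient_allowed("https://pay.example-air.test/checkout", ALLOWED))
print(is_recipient_allowed("https://pay.example-air.test.evil.test/checkout", ALLOWED))
```

Matching the exact parsed host, rather than searching the URL string for a trusted name, is what defeats lookalike hosts and query-string tricks of the kind an injected instruction might supply.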